Quorai: A Multi-Agent LLM System for Investment Deliberation

Single-agent LLM reasoning has a well-documented weakness: it collapses to a single viewpoint. Given a prompt, the model produces one plausible chain of thought, and whichever interpretation it commits to early tends to shape everything that follows. This is acceptable for tasks with a clear right answer, but it is poorly suited to problems where the correct answer depends on reconciling genuinely incompatible perspectives.

I wanted to see whether a deliberative multi-agent architecture — multiple LLMs adopting distinct viewpoints and then arguing — would produce meaningfully different decisions than a single, well-prompted model. I chose retail equity trading as the substrate. Trading offers three properties that make it useful for this kind of research: a clear ground truth in subsequent price movement, a rich literature of conflicting expert philosophies that translate naturally into persona prompts, and well-understood failure modes that prevent me from fooling myself about the results.

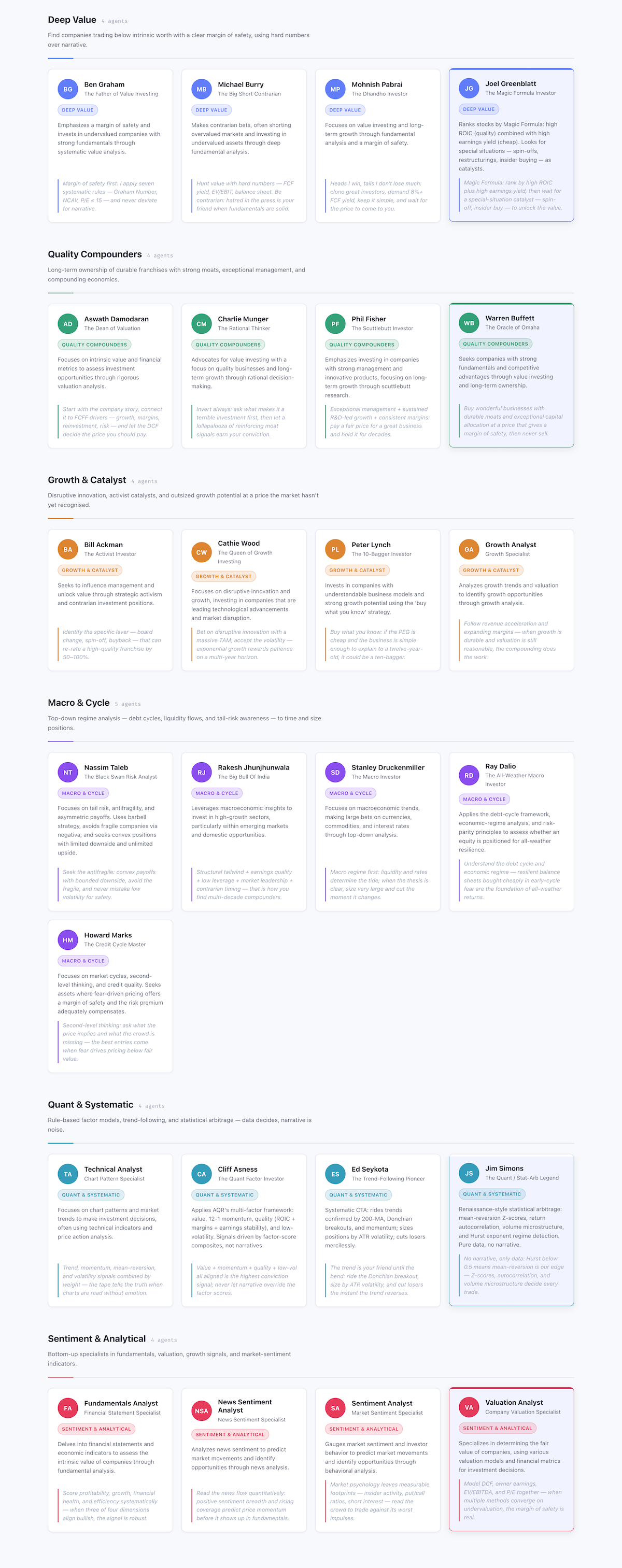

The result is Quorai — a quorum of twenty-five analyst agents (Buffett, Cathie Wood, Dalio, Simons, Burry, and others) that independently produce signals for each ticker, converge through a debate node, and pass through a portfolio manager that weighs their views against pure-math risk constraints. It is built on LangGraph, runs against multiple LLM providers interchangeably, and supports both historical backtesting and live paper trading via Alpaca.

This post is not a claim that Quorai makes money. It does not, reliably. What I want to share are the design choices that I think generalise beyond trading — in particular the role of debate nodes, the persistence of persona-driven prompting across runs, and the importance of separating LLM-suitable from LLM-unsuitable tasks within an agentic system.

Trading as a research substrate

Before going further, a disclaimer: nothing in this post should be read as investment advice. Quorai is an educational proof of concept, and the backtesting results are mixed enough that I would not trade real capital on its signals.

That said, trading is a genuinely useful substrate for this kind of research. The decision domain is well-bounded: at the end of each trading session, you take a position, the market moves, and you have a reward signal. Conflicting expert frameworks — value investing, momentum, macro, quantitative — are extensively documented and genuinely irreconcilable in many market regimes. And the failure modes (look-ahead bias, overfitting, data snooping) are well-understood, which means I can be reasonably honest about what the results mean. It is a more grounded testbed than benchmarks that lack a real-world feedback loop.

Architecture: a deliberation graph on LangGraph

The system is implemented as a LangGraph graph in which each analyst agent is a node. On each trading cycle, the following happens:

- Financial data is fetched from Finnhub and Yahoo Finance: price history, fundamental ratios, insider transactions, and news sentiment.

- Each analyst agent receives the same data and independently produces a structured output: a direction (buy / sell / hold), a conviction score, and a written rationale.

- Where agents disagree on a ticker, a debate node is triggered.

- A risk manager node applies pure-math position limits — no LLM involved.

- A portfolio manager agent receives all signals and risk limits, then issues final orders with written reasoning.
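The cycle above can be sketched as plain Python so the control flow is visible. This is not LangGraph code and not Quorai's implementation. In Quorai the analysts, debate, risk manager and portfolio manager are graph nodes; here they are ordinary callables. The `Signal` fields mirror the structured output listed above, but the 0 to 1 conviction scale, the rule that a debate fires only when at least one agent says buy and another says sell, and all function names are assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Literal

Direction = Literal["buy", "sell", "hold"]


@dataclass(frozen=True)
class Signal:
    """Structured output of one analyst agent for one ticker."""
    agent: str
    ticker: str
    direction: Direction
    conviction: float  # assumed scale: 0.0 (none) to 1.0 (maximum)
    rationale: str

    def __post_init__(self) -> None:
        # Reject malformed model output early instead of passing it downstream.
        if self.direction not in ("buy", "sell", "hold"):
            raise ValueError(f"unknown direction: {self.direction!r}")
        if not 0.0 <= self.conviction <= 1.0:
            raise ValueError("conviction must lie in [0, 1]")


def run_cycle(
    tickers: List[str],
    fetch_data: Callable[[str], dict],
    analysts: List[Callable[[str, dict], Signal]],
    debate: Callable[[str, List[Signal], dict], Signal],
    risk_limits: Callable[[Dict[str, dict]], Dict[str, float]],
    portfolio_manager: Callable[[Dict[str, List[Signal]], Dict[str, float]], object],
):
    """One trading cycle: fetch, analyse, debate on disagreement, limit risk, decide."""
    data = {t: fetch_data(t) for t in tickers}
    signals_by_ticker: Dict[str, List[Signal]] = {}
    for t in tickers:
        # Every analyst sees the same data and answers independently.
        signals = [analyst(t, data[t]) for analyst in analysts]
        directions = {s.direction for s in signals}
        if {"buy", "sell"} <= directions:
            # Opposing conclusions: the moderator's reconciled signal replaces
            # the raw disagreement before the portfolio manager sees it.
            signals = [debate(t, signals, data[t])]
        signals_by_ticker[t] = signals
    limits = risk_limits(data)  # deterministic maths, no LLM call
    return portfolio_manager(signals_by_ticker, limits)
```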

The twenty-five analyst agents fall into six groups (representative members in parentheses): deep value (Buffett, Munger, Pabrai, Greenblatt, Damodaran), quality compounders (Lynch, Fisher, Jhunjhunwala), growth and catalyst (Ackman, Cathie Wood), macro and cycle (Dalio, Druckenmiller, Marks), quant and systematic (Simons, Asness, Seykota), and sentiment and analytical (Burry, Taleb, plus news and social sentiment agents).

Each agent is a persona prompt combined with a structured-output call. The persona prompt describes the investor’s stated philosophy — sourced from books, interviews, and public writings — and instructs the agent to reason only from data it has been given. No agent has internet access at inference time.
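A minimal sketch of how a persona prompt and a structured-output call might fit together, assuming the model is asked to reply in JSON. The template wording, `build_messages` and `parse_signal` are hypothetical; Quorai's actual prompts are not reproduced here. LangChain chat models also offer `with_structured_output`, which would replace the hand-written parsing.

```python
import json

# Hypothetical template; Quorai's actual persona prompts are not reproduced here.
PERSONA_TEMPLATE = (
    "You are an equity analyst reasoning in the style of {name}.\n"
    "Investment philosophy: {philosophy}\n"
    "Reason only from the data supplied in the user message.\n"
    'Reply with JSON only: {{"direction": "buy" | "sell" | "hold", '
    '"conviction": <number from 0 to 1>, "rationale": <string>}}'
)

VALID_DIRECTIONS = {"buy", "sell", "hold"}


def build_messages(name: str, philosophy: str, ticker: str, data: dict) -> list:
    """Combine a persona system prompt with the market data every agent shares."""
    system = PERSONA_TEMPLATE.format(name=name, philosophy=philosophy)
    user = f"Ticker: {ticker}\nData:\n{json.dumps(data, indent=2, sort_keys=True)}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


def parse_signal(raw: str) -> dict:
    """Validate the model's JSON reply and normalise the direction."""
    obj = json.loads(raw)
    direction = str(obj["direction"]).strip().lower()
    if direction not in VALID_DIRECTIONS:
        raise ValueError(f"unknown direction: {direction!r}")
    conviction = float(obj["conviction"])
    if not 0.0 <= conviction <= 1.0:
        raise ValueError("conviction must lie in [0, 1]")
    return {"direction": direction, "conviction": conviction,
            "rationale": str(obj["rationale"])}
```

Only the system prompt differs between agents; the user message carries identical data, which is what makes the agents' disagreements attributable to persona rather than input.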

The debate node

The most interesting design decision in Quorai is the debate node, and I remain genuinely uncertain about whether it helps.

When two or more analyst agents reach opposing conclusions on the same ticker, a separate LLM call is triggered. An LLM moderator receives both rationales and the supporting data, and is asked to produce a reconciled signal: does the bull case or the bear case hold up better given the actual numbers, and where does each argument fail?
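The trigger condition and the moderator's input can be sketched as follows. Treating "opposing conclusions" as at least one buy and at least one sell on the same ticker (holds never trigger a debate) is an assumption, as are the function names and the prompt wording.

```python
from __future__ import annotations

import json
from collections import defaultdict


def find_disagreements(signals: list[dict]) -> dict[str, dict[str, list[dict]]]:
    """Return {ticker: {"buy": [...], "sell": [...]}} for tickers with both sides."""
    by_ticker = defaultdict(lambda: {"buy": [], "sell": []})
    for s in signals:
        if s["direction"] in ("buy", "sell"):  # holds are ignored here
            by_ticker[s["ticker"]][s["direction"]].append(s)
    return {t: sides for t, sides in by_ticker.items() if sides["buy"] and sides["sell"]}


def moderator_prompt(ticker: str, sides: dict[str, list[dict]], data: dict) -> str:
    """Hypothetical moderator prompt: both rationales plus the supporting data."""
    bull = "\n".join(f"- {s['agent']}: {s['rationale']}" for s in sides["buy"])
    bear = "\n".join(f"- {s['agent']}: {s['rationale']}" for s in sides["sell"])
    return (
        f"Ticker: {ticker}\nSupporting data: {json.dumps(data, sort_keys=True)}\n\n"
        f"Bull case:\n{bull}\n\nBear case:\n{bear}\n\n"
        "Which case holds up better given the actual numbers, and where does each "
        "argument fail? Return a reconciled direction (buy/sell/hold) and conviction."
    )
```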

The intent is to surface the strongest counterargument to each position — something a single-agent prompt struggles to do, because the model that generated the bull case is unlikely to spontaneously produce the most persuasive bear case. In practice, the debate moderator does catch weak arguments. I have observed runs where a bullish agent cited momentum from a period with obvious earnings distortions, and the debate node correctly identified the distortion and weighted the bear case higher. Whether this improves portfolio performance is less clear — which I will return to.

A short example trace (paraphrased for brevity):

Buffett agent: AAPL — buy. Free cash flow yield is compelling relative to ten-year treasuries. Balance sheet is conservative. Brand moat intact.

Druckenmiller agent: AAPL — sell. Macro regime is unfavourable. Dollar strength compresses international revenue. Multiple has not contracted to reflect the rate environment.

Debate moderator: Buffett’s free cash flow case is sound but assumes a stable multiple. Druckenmiller’s macro argument is the more proximate risk. Resolved: hold, with reduced conviction.

The portfolio manager receives this resolved signal rather than the raw disagreement.

Risk management as pure mathematics

One of the clearer conclusions from building Quorai is that not every node in an agentic system should involve an LLM. The risk manager is a pure-math node: it computes annualised volatility per ticker, correlation between tickers, and applies a maximum-position-size constraint that scales inversely with volatility and adjusts for correlation clustering. No language model is involved.
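The sketch below shows one way such a rule could look, assuming daily closing prices annualised with √252. The specific formula is hypothetical: an inverse-volatility cap (`max_weight * min(1, target_vol / vol)`) followed by a correlation penalty that divides by one plus the mean positive correlation with the other tickers. The defaults `target_vol=0.20` and `max_weight=0.20` are illustrative, not Quorai's parameters.

```python
import math
import statistics

TRADING_DAYS_PER_YEAR = 252  # assumes daily closing prices


def daily_returns(prices):
    """Simple returns between consecutive closes."""
    return [b / a - 1.0 for a, b in zip(prices, prices[1:])]


def annualised_vol(returns):
    """Sample standard deviation of daily returns, scaled to a year."""
    return statistics.stdev(returns) * math.sqrt(TRADING_DAYS_PER_YEAR)


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either series is constant."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)


def position_limits(price_history, target_vol=0.20, max_weight=0.20):
    """Maximum portfolio weight per ticker (hypothetical rule).

    1. Inverse-volatility cap: max_weight * min(1, target_vol / vol).
    2. Correlation penalty: divide by 1 + mean positive correlation with the
       other tickers, so highly correlated names each receive a smaller cap.
    """
    returns = {t: daily_returns(p) for t, p in price_history.items()}
    limits = {}
    for t, r in returns.items():
        vol = annualised_vol(r)
        cap = max_weight * min(1.0, target_vol / vol) if vol > 0 else max_weight
        corrs = [pearson(r, r2) for t2, r2 in returns.items() if t2 != t]
        penalty = sum(max(0.0, c) for c in corrs) / len(corrs) if corrs else 0.0
        limits[t] = cap / (1.0 + penalty)
    return limits
```

Every number this function returns can be recomputed by hand from the price series, which is the auditability property described above.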

This was a deliberate choice. LLMs are good at reasoning about qualitative frameworks but have a documented tendency to produce confident-sounding numbers from thin air when asked for quantitative calculations. Routing risk management through a deterministic function gives the system one component whose outputs I can audit without needing to interpret natural language.

The useful design principle, generalised: delegate to an LLM where the task requires judgment about ambiguous natural-language evidence; use code where the task has a well-defined mathematical formulation.

What surprised me

Agent disagreement is itself informative. When all twenty-five agents converge on a signal, that consensus tells you something. When they split thirteen to twelve, the split is also information — the system is saying that the case is genuinely ambiguous given available data, which is often true. I started logging the conviction-score distribution across agents, and this turned out to be more useful than the majority vote alone.
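A sketch of a summary that keeps more than the majority vote, assuming each agent contributes a (direction, conviction) pair. Mapping buys to +conviction, sells to -conviction and holds to 0, then reporting the mean and population standard deviation, is my choice of summary rather than what Quorai logs.

```python
import statistics
from collections import Counter


def summarise_votes(votes):
    """Summarise (direction, conviction) pairs from all agents for one ticker.

    Buys count as +conviction, sells as -conviction and holds as 0, so the
    mean is a net score and the population standard deviation measures how
    spread out the agents' views are.
    """
    counts = Counter(direction for direction, _ in votes)
    signed = [
        c if d == "buy" else -c if d == "sell" else 0.0
        for d, c in votes
    ]
    return {
        "counts": dict(counts),
        "majority": counts.most_common(1)[0][0],
        "net_conviction": statistics.fmean(signed),
        "dispersion": statistics.pstdev(signed),
    }
```

A 13 to 12 split and a unanimous vote both report "buy" as the majority, but the net score (about 0.02 versus 0.6 at conviction 0.6) and the dispersion tell them apart.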

Persona prompts are surprisingly stable. I had expected persona prompts to produce superficially different phrasings of the same underlying model output. In practice, the agents produce systematically different signals in the same market conditions. Buffett agents consistently underweight momentum; Druckenmiller agents consistently flag macro regime changes. Whether this reflects something genuine about the philosophies or is an artefact of prompt engineering, I cannot determine — but the character persists across hundreds of runs.

The portfolio manager’s reasoning frequently adds value. I had assumed the portfolio manager would simply be a weighted average of agent signals. In practice, the LLM call at that stage often catches inconsistencies that the individual analyst prompts missed — for example, noting that two bullish signals rest on contradictory premises about the interest rate environment, and downweighting both.

Token cost scales badly. Twenty-five agents × N tickers × M trading days equals a large number of API calls. For a modest backtest of two tickers over one month, I was making several thousand calls. The cheapest model that produces useful outputs brings this to a manageable cost, but it is a real constraint on backtest window length.
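As a floor: one month is roughly 21 trading days, so two tickers give 25 × 2 × 21 = 1,050 analyst calls if each agent makes exactly one call per ticker per day, before any debate or portfolio-manager calls. Reaching several thousand implies more calls than that floor; the estimator below leaves the reason open as a hypothetical `calls_per_agent` multiplier rather than guessing at it. All parameter names are illustrative.

```python
def estimate_calls(n_agents, n_tickers, n_days,
                   calls_per_agent=1, debate_rate=0.0, pm_calls_per_day=1):
    """Rough LLM call count for a backtest (all parameters hypothetical).

    calls_per_agent: LLM calls one agent makes per ticker per day.
    debate_rate: fraction of ticker-days on which a debate is triggered.
    pm_calls_per_day: portfolio-manager calls per trading day.
    """
    analyst_calls = n_agents * n_tickers * n_days * calls_per_agent
    debate_calls = round(n_tickers * n_days * debate_rate)
    pm_calls = n_days * pm_calls_per_day
    return analyst_calls + debate_calls + pm_calls
```

The analyst term dominates and is linear in every factor, so doubling the backtest window or the ticker list roughly doubles the bill.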

What does not work yet

The backtest results are mixed, and I want to be direct about that.

The system produces plausible reasoning chains. The agents argue in character. The portfolio manager writes coherent memos. But plausible reasoning does not imply profitable trading, and there is a more fundamental issue: any LLM trained on data up to a recent cutoff has effectively seen outcomes it is being asked to predict. The degree to which this creates look-ahead bias in backtesting is difficult to quantify and probably non-trivial.

I am publishing Quorai despite this because I think the interesting contribution is the multi-agent deliberation architecture, not the trading results. If you are reading this hoping to deploy it with real capital, I would encourage you not to. If you are reading it because you are thinking about how to structure disagreement and reconciliation in an agentic system, there may be something useful here.

Stack and reproducibility

Quorai is built on LangGraph and LangChain, and supports multiple LLM providers interchangeably: OpenAI, Anthropic, Groq, Google Gemini, DeepSeek, xAI, and OpenRouter. Market data comes from Yahoo Finance and Finnhub. Live paper trading uses the Alpaca API.

To run a backtest:

```shell
uv sync

uv run python -m src.backtesting \
  --tickers AAPL,MSFT \
  --model deepseek/deepseek-chat \
  --model-provider OpenRouter \
  --show-reasoning
```

Full setup instructions are in the GitHub repository. The project page has an interactive agent gallery and a diagram of the full deliberation graph.

What is next

I am currently exploring two directions: a structured feedback loop that logs which analyst agents were right and wrong over rolling windows and adjusts their conviction weights accordingly; and a leaner architecture that selects a subset of agents by market regime, reducing token cost while retaining coverage of the relevant investment frameworks.
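The first direction could look something like the sketch below. Everything in it is hypothetical and not part of Quorai today: a buy or sell counts as correct when the sign of the subsequent return matches it, holds are not scored, and an agent's hit rate over its last `window` scored calls maps to a conviction multiplier of 2 × hit rate (so 50% gives 1.0), with a floor so no agent is silenced entirely.

```python
from collections import defaultdict, deque


class RollingWeights:
    """Hypothetical per-agent conviction multipliers from rolling hit rates."""

    def __init__(self, window=20, floor=0.25, prior=0.5):
        self.floor = floor
        self.prior = prior  # hit rate assumed for agents with no history
        # Each agent keeps only its most recent `window` scored calls.
        self.history = defaultdict(lambda: deque(maxlen=window))

    def record(self, agent, direction, realised_return):
        """Score one call against the return that followed it."""
        if direction == "hold":
            return  # holds are not scored in this sketch
        correct = (direction == "buy") == (realised_return > 0)
        self.history[agent].append(correct)

    def hit_rate(self, agent):
        h = self.history.get(agent)  # .get avoids creating empty entries
        if not h:
            return self.prior
        return sum(h) / len(h)

    def weight(self, agent):
        # Linear map: 50% hit rate -> 1.0, 100% -> 2.0, floored below.
        return max(self.floor, 2.0 * self.hit_rate(agent))
```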

If you find Quorai interesting, the repository is on GitHub. Contributions — new analyst personas, data sources, or backtesting improvements — are welcome via pull request.

Quorai builds on virattt/ai-hedge-fund for the persona-agent architecture and LLM prompt patterns, and on TauricResearch/TradingAgents for the bull/bear debate concept. Both are worth reading.